Kate Styer

Overview

Keeper is a browser extension for social media that identifies harmful language and makes community guidelines easily accessible.

Challenge

How might we mitigate incivility and conflict on social media by redesigning it through the lens of restorative justice?

Role

Ideation, User Research, User Experience Design, Visual Design, Prototyping, Usability Testing

Context

Master's Thesis, School of Visual Arts, September 2018 - May 2019

Advisors

Dalit Shalom, Senior Product Designer, R&D Lab, The New York Times; Eric Forman, Faculty and Head of Innovation, School of Visual Arts; Graham Letorney, Faculty, School of Visual Arts

How It Works

Leveraging existing machine learning technology that analyzes toxic language, Keeper helps us reconsider our words in real-time. In addition, Keeper makes information about behavior and speech standards established by platforms easy to access.

Easy access to platform community guidelines

Keeper makes the platform's community standards easily accessible, inserting an access point to them on your screen as you scroll.

By reframing the standards as Community Agreements, Keeper helps remind you that when you are using the service, you are agreeing to a certain standard of behavior.

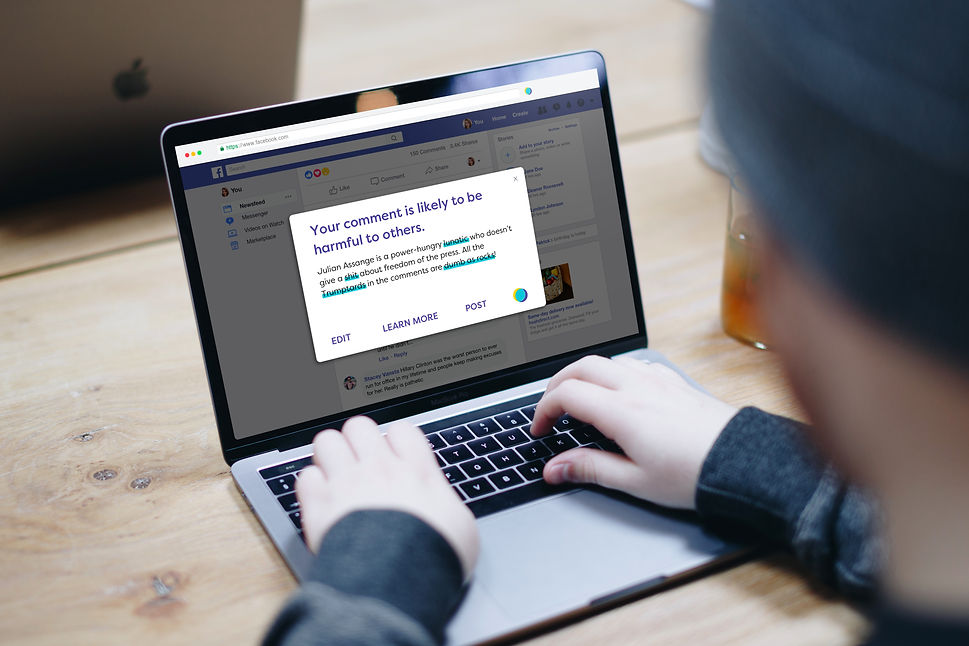

Identifies potentially harmful language

When you comment on a post, Keeper takes you out of the Facebook environment, separating you from the noise of other comments and incoming notifications. Using existing machine learning technology, it then identifies potentially harmful language in your comments, and gives you the opportunity to revise what you wrote.
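As a rough illustration of the review step described above, the sketch below scores a comment and decides whether to prompt for a revision. Everything in it is hypothetical: the names `ToxicityScorer`, `reviewComment`, and `demoScorer`, and the `0.7` threshold, are assumptions made for the example and do not describe Keeper's actual implementation or any particular machine learning service.

```typescript
// Hypothetical sketch: none of these names or values come from Keeper itself.

// A scorer maps comment text to a toxicity estimate between 0 and 1.
// In practice it would wrap whichever machine learning service is used.
type ToxicityScorer = (text: string) => number;

interface ReviewResult {
  action: "post" | "suggest-revision";
  score: number;
}

// Assumed cut-off; the case study does not state a threshold.
const REVISION_THRESHOLD = 0.7;

function reviewComment(
  text: string,
  score: ToxicityScorer,
  threshold: number = REVISION_THRESHOLD,
): ReviewResult {
  const s = score(text);
  // At or above the threshold, offer the author a chance to revise.
  return { action: s >= threshold ? "suggest-revision" : "post", score: s };
}

// Stand-in scorer for demonstration only: flags a tiny word list.
const demoScorer: ToxicityScorer = (text) =>
  /\b(idiot|stupid)\b/i.test(text) ? 0.9 : 0.1;
```

Calling `reviewComment("You are an idiot", demoScorer)` returns the `"suggest-revision"` action, while a neutral comment passes through as `"post"`. Keeping the scorer as a parameter means the review logic does not depend on any one toxicity model.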


Creates more opportunities for empathy

Keeper helps you review your comments when you are replying directly to another user as well. When you are writing your comment, it inserts biographical information about the person you are replying to, aiming to conjure empathy or feelings of connection towards this person, especially if you disagree.

User Research

Methodology

My primary and secondary research was focused on understanding:

- What leads to incivility and conflict in digital conversational spaces like social media?
- What strategies are currently deployed by platforms and communities in response to or as preventive measures?

I spoke to several online community moderators and members, from a variety of platforms and subject areas. For each interview, I created a discussion guide and took notes as we talked.

Research Synthesis

Immediately following each interview, I read through my notes and highlighted common or unexpected responses. After transferring each highlight to a post-it note, I created an affinity map for each interview. When I had completed five interviews, I grouped my individual maps into one large map. I narrowed all of my insights down to three primary categories of information that best encapsulated what I had discovered. I used these three categories to define three key design challenges:

Justice

Online communities apply commonplace offline understandings of justice in response to violations of community guidelines. Both default to removing bad actors from the community.

Governance

The communities that have the most success managing and preventing toxic behavior have very clearly stated and strongly enforced community guidelines.

Anonymity & Invisibility

The anonymity and invisibility afforded by the internet is one of the key enablers of toxic behavior.

Prototyping

Prototype 1: Restorative Justice on Slack

I have long been an advocate of a model of justice called restorative justice, which focuses on healing rather than punishment when a harmful incident occurs between two people or within a community. It’s similar to conflict resolution through mediation, and is commonly used in schools and the criminal justice system. In many cases, it serves as an antidote to existing systems that are no longer fairly serving the people who use them.

Restorative justice can be implemented through the practice of restorative circles, highly structured group conversations, as a regular relationship building tool or in response to conflict.

I set out to simulate a restorative circle in an online conversational environment. I created a framework for a moderated discussion in a private Slack channel and invited four classmates to participate. I built the following four basic components of a restorative circle into the discussion framework: a code of conduct, an established Circle Keeper, a circular meeting formation, and the use of a talking piece.

Circle Keeper & Code of Conduct

I established myself as the discussion moderator. In my experimental discussion environment, this meant that I was responsible for enforcing the discussion instructions.

To simulate the code of conduct, I wrote and pinned a message outlining the instructions for participating in the discussion.

Circular meeting formation

Typically, the direction of discussion in a restorative circle moves clockwise or counterclockwise around the circle. The implementation of a speaking order attempted to achieve this, by making sure every person had a chance to speak, and that they spoke only when it was their turn.

Talking Piece

Since the environment in Slack makes it impossible to prevent someone from speaking when they want, the only way to simulate the talking piece was to clearly enforce the established speaking order, and rely on the group to adhere to it. In addition, I used a directive that each participant should add a check mark emoji at the end of the message, to indicate when they are done speaking.
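The speaking-order rule above can be sketched as a small turn tracker. This is only an illustration of the rule as I enforced it by hand; the `SpeakingOrder` class and the `DONE_MARKER` constant are hypothetical names, and nothing here was actually built or run in Slack during the experiment.

```typescript
// Hypothetical sketch of the speaking-order rule; names are illustrative.
const DONE_MARKER = "✅"; // assumed stand-in for the check mark emoji

class SpeakingOrder {
  private participants: string[];
  private index = 0;

  constructor(participants: string[]) {
    if (participants.length === 0) {
      throw new Error("need at least one participant");
    }
    this.participants = participants;
  }

  // Who currently holds the "talking piece".
  current(): string {
    return this.participants[this.index] as string;
  }

  // Returns true if the message is in turn, false if it is out of turn.
  // A message ending with the done marker passes the turn to the next
  // participant, wrapping around like a circle.
  receive(author: string, message: string): boolean {
    if (author !== this.current()) return false;
    const trimmed = message.trim();
    if (trimmed.slice(-DONE_MARKER.length) === DONE_MARKER) {
      this.index = (this.index + 1) % this.participants.length;
    }
    return true;
  }
}
```

With participants `["Ana", "Ben", "Cy"]`, a message from Ben is rejected until Ana posts a message ending in the check mark, at which point the turn moves to Ben.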

Insights

“It made me pay more attention to people's comments and feel listened to.”

This feeling seemed to occur primarily because of the speaking order and talking piece mechanisms, which ensured that no one spoke out of turn.

“It was a bit too slow. While waiting for other people's answers, I was distracted.”

The digital conversational platforms we use like Slack and social media were built to increase efficiency and speed, which we’ve now come to expect from technology. But are those things helpful when we are trying to understand each other better or resolve conflicts?

What if, in order to be a part of an online community, you had to participate in a rigid, time-consuming process like a restorative circle-style discussion?

“Emojis were a fun, subtle way to respond, even though it wasn't in the instructions.”

Without being instructed, participants started using emoji responses on each other's messages to indicate their feelings about it. This wasn't surprising given the way emojis are used on Slack, but it suggested a solution to the discomfort of a rigid and unfamiliar conversation structure.

“Moderating is hard work.”

As the moderator, I felt a big responsibility to the participants to make sure the discussion ran smoothly and produced the desired effect. Waiting for each person to type their response, acknowledging their comment, and then referring back to the speaking order to determine who should speak next was slower and more strenuous than I expected. I had planned for five questions, but ran out of time and ended up asking only three.

Prototype 2: Restorative Justice on Disqus

Encouraged by the results of my Slack experiment, I decided to next explore whether a restorative justice approach to conflict resolution could also be adapted for online communities.

I reframed my challenge as: How might we mitigate conflict and harassment in online communities with a restorative justice approach to community guideline enforcement actions?

I studied platform procedures for responding to conflicts on Disqus, Twitter, and Facebook, along with those of the platforms of the moderators I had interviewed. These were procedures designed into and supported by the platforms. I created a map of the typical milestones in the journey of one user breaking the community guidelines, another user reporting them, and the moderator reviewing the case, and determined where in that journey restorative justice could be used.

Service Mapping

In order to start visualizing what this would look like on an existing platform, I mapped out the process on Disqus, a web plugin that provides commenting sections for publishers. It also serves as an independent platform where users can create communities and participate in discussions. This helped me to identify risks, potential pain-points, and moments where the process could go off the rails.

Service Mock-up

I created a visual mock-up of the process within the existing design of Disqus. I designed around the following scenario and user archetypes: one who had been offended by another user’s comment, and the author of the offensive comment. I positioned this process alongside the other ways Disqus users can report comments.

Another option for reporting

In my proposal, when an offended user selects that they want to flag a comment as something they disagree with (top), they then are asked if they would like to participate in a “Restorative Channel” discussion with the person who wrote the comment.

Both users must be willing participants

The user who wrote the offensive comment then receives a notification that another user has requested a Restorative Channel discussion with them. That user can view the request, which includes their comment, the language highlighted by the other user, and the other user’s explanation for why they want to have a discussion. They can Accept, Ignore or Report the request.

Temperature check

Before the discussion takes place, both users must answer two questions, separately: How are you feeling about this comment now? What do you want the other user to understand about this comment? The channel is open for 24 hours, so both users must submit their answers and begin the discussion within that time frame. The time limit encourages users to address and resolve their conflict sooner rather than later.

Structured and free discussion

Once both users have submitted their answers, their answers appear side by side, and they can now begin talking to each other freely. If things go awry, for example if one user becomes abusive or no consensus is reached, the other user can notify the moderator or leave the discussion. The moderator does not need to be present during the discussion.
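The Restorative Channel flow described in this mock-up can be summarized as a small state model. The sketch below is an assumption-laden illustration, not something that exists in Disqus: the type and function names are hypothetical, the 24-hour window is assumed to start when the request is accepted, and Ignore and Report are both simplified to closing the channel.

```typescript
// Hypothetical model of the Restorative Channel flow; all names are illustrative.
type ChannelState = "requested" | "awaiting-answers" | "discussion" | "closed";

// The 24-hour window from the mock-up, in milliseconds.
const WINDOW_MS = 24 * 60 * 60 * 1000;

interface Channel {
  state: ChannelState;
  openedAt: number | null; // assumed: the window starts when the request is accepted
  answered: string[];      // users who have completed the temperature check
}

function createRequest(): Channel {
  return { state: "requested", openedAt: null, answered: [] };
}

// The comment's author can Accept, Ignore or Report the request.
// Simplification: Ignore and Report both just close the channel here.
function respond(
  ch: Channel,
  response: "accept" | "ignore" | "report",
  now: number,
): Channel {
  if (ch.state !== "requested") return ch;
  if (response === "accept") {
    return { state: "awaiting-answers", openedAt: now, answered: ch.answered };
  }
  return { state: "closed", openedAt: ch.openedAt, answered: ch.answered };
}

// Free discussion opens only once both users have submitted their answers
// within the window; a late submission closes the channel instead.
function submitAnswers(ch: Channel, user: string, now: number): Channel {
  if (ch.state !== "awaiting-answers" || ch.openedAt === null) return ch;
  if (now - ch.openedAt > WINDOW_MS) {
    return { state: "closed", openedAt: ch.openedAt, answered: ch.answered };
  }
  const answered =
    ch.answered.indexOf(user) >= 0 ? ch.answered : ch.answered.concat([user]);
  const state: ChannelState = answered.length >= 2 ? "discussion" : "awaiting-answers";
  return { state, openedAt: ch.openedAt, answered };
}
```

Modeling each step as a function that returns a new `Channel` keeps the rules visible in one place: a channel cannot reach `"discussion"` unless the request was accepted and two different users answered in time.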

Insights

“Going against human nature.”

When I presented this prototype to my class, one person commented that the overall process was “Going against human nature.” I wondered if that was true, or whether it was going against how we’ve learned to communicate on the internet, which has largely been informed by the design choices of platforms that grew too fast to notice the ways that people would use them for harm.

A literal adaptation

This prototype was a fairly literal adaptation of a restorative circle. As I was building it, the question kept occurring to me, does it have to be so literal? Could I instead design conversational spaces by injecting the essence of restorative justice, rather than trying to mimic it so closely?

Reframing the question

Why does restorative justice work in the physical world, and how can that be translated to the internet? In what ways do restorative justice principles already exist online, but have yet to be identified as such?

Pivot

Keeper, Version 1

After experimenting with these two prototypes and attending a restorative circle facilitation training, I concluded that the real impact of restorative circles isn’t the structure so much as the environment they create: one of intention, where empathy is expected and everyone is present in person. How could you recreate these qualities in an online space, and could they achieve the same effect as an in-person restorative circle?

I decided to pivot away from adapting restorative justice to injecting elements of restorative justice into existing designs of conversational platforms.

In its first iteration, Keeper was a browser extension for Facebook that facilitated more civility and empathy in our conversations through four features:

Values Wall

Keeper asks users to make a selection of ten personal values from a predetermined list that are most important to them—things like family, honesty, or faith. It inserts a box at the top of their profile where these values are visible to other users, who might discover that they too value the same things.

Annotated Guidelines

Keeper makes Facebook’s community standards more easily accessible by inserting them directly into your home page. It also aims to make them easier to understand by annotating them and pulling out the information that is most relevant.

Conversation View

Rather than showing comments on a post in a vertical column of text, this view hides the text of the comments and shows only the profile picture of the author. It rearranges the user avatars into rings around the post being discussed, so it’s more like a conversation around a dinner table. When you hover over each avatar, an abbreviated profile window appears, with the user’s photo, values and comment.

Comment Review

When the feature is used, a notification will appear asking if you are sure you want to post something. The goal is to inject a short moment of reflection, which can sometimes make you reconsider certain words, the conversation itself and who you’re talking to.

User Testing

Methodology

For my first round of testing my Keeper prototype, I recruited 8 interview participants who identified as frequent commenters on Facebook. I created a discussion guide and conducted remote, moderated interviews with each of them where they engaged with my prototype and answered questions about their experience as they did so.

The goal of this research was to answer the following questions:

- How likely are users to use this product?
- Do users understand the overall benefit of the product?
- What are the risks associated with the product?

To answer these questions, participants engaged with my prototype, were prompted to imagine scenarios in which they would use the product features, and shared their immediate reactions, perceptions of value and likelihood of use.

Insights

Up for Review

User testing called the perceived value of two of the four features, the Values Wall and Conversation View, into question. It also revealed to me that these features, especially the Values Wall, felt underdeveloped. Users expressed intrigue, but couldn’t immediately recognize what value the features could bring.

Shifting Accountability

The feedback to both the Annotated Community Standards and Comment Review features was solid enough that I felt comfortable saying my users found them to be valuable. One user noted that the Comment Review feature felt like it shifted the onus onto the user to take accountability for their actions, which is very much in line with my vision for this project. I thought this feature could go along well with the Annotated Community Standards, which in its own way threw the accountability back on the user.

Guardrails

My product, with the two features described above, would aim to be the guardrails that Facebook hasn’t been able to provide. Since I like metaphors, it would almost be more like training wheels, in the sense that it’s not necessarily dictating what users should do, but it’s guiding them towards making decisions that are as objectively safe and thoughtful as possible.

I decided to focus on developing and visually designing the two features, Annotated Community Standards and Comment Review.

Lessons Learned

Process

One lesson learned as a result of this project is that the good ideas and the right decisions don’t just hit you out of nowhere. I wanted them to, because it’s hard embracing uncertainty and swimming around in it indefinitely. It’s easier to imagine that things will just come to you one day if you think about them enough.

There were certain points in this process when I was so sure. Every couple of weeks I thought I’d been hit with some clarity, and each time I ran with it. In retrospect, I don’t think it was clarity as I’ve known it. I wanted that feeling of certainty, of conviction so badly, I forced it into being. Perhaps that’s what clarity really is: in the face of uncertainty, a capitulation to what makes the most sense at that moment.

Keeper

The most harmful behavior we’ve seen occur on social media can be rooted in deep cultural norms created within communities over time, facilitated by how platforms have been designed. It can also be rooted in the messiness and complexity of being a human. Many of us have painful, difficult histories that color every minute of our every day, including our time spent online, whether we are able to acknowledge them or not. Platforms need to take responsibility for letting violent content persist and hateful communities grow, but we as users, as communities, as fellow human beings, need to also reflect on why some of us are doing so much harm.

Keeper is a tiny, imperfect offering for a massive problem. It explores what it could look like if social media were designed with our complexities and vulnerabilities in mind.